Playing around with the URL Inspection API

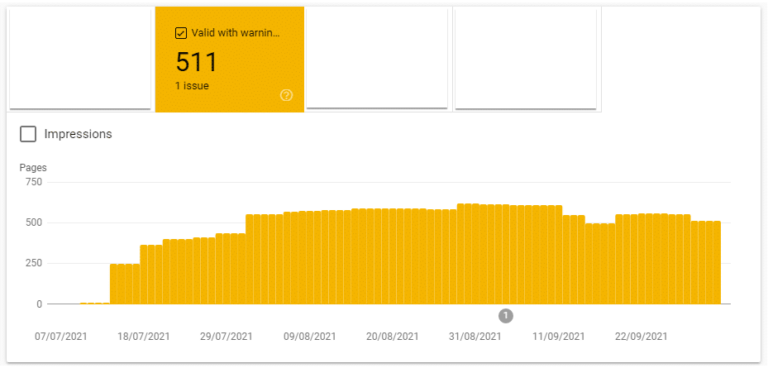

A key challenge for any website is monitoring how its most important pages are crawled and indexed.

Over the last few months, I’ve been working with a senior developer to experiment with the URL Inspection API. Our goal was to see whether we could build an indexing monitoring tool by requesting the URL Inspection API every day and storing the results.

We did it. This blog post shares a few notes and lessons learned from the data we collected.